The rise of synthetic media (content generated or manipulated by AI, of which deepfakes are the best-known example) presents one of the most complex technological challenges of our time. While it unlocks incredible creative potential, it also opens the door to sophisticated misinformation, fraud, and harassment. Navigating this landscape requires a multi-faceted approach combining technology, policy, and public awareness.

What is Synthetic Media?

Synthetic media is created using generative models such as Generative Adversarial Networks (GANs), diffusion models, and, for text, large language models. These models can produce hyper-realistic:

- Video and Images: Swapping faces, altering expressions, or creating entire scenes from scratch.

- Audio: Cloning voices to say things the original speaker never said.

- Text: Generating articles, emails, or social media posts that are often hard to distinguish from human writing.

The ease of access to these tools means that creating convincing fakes is no longer limited to Hollywood studios or state-level actors.

The Detection Challenge: A Technological Arms Race

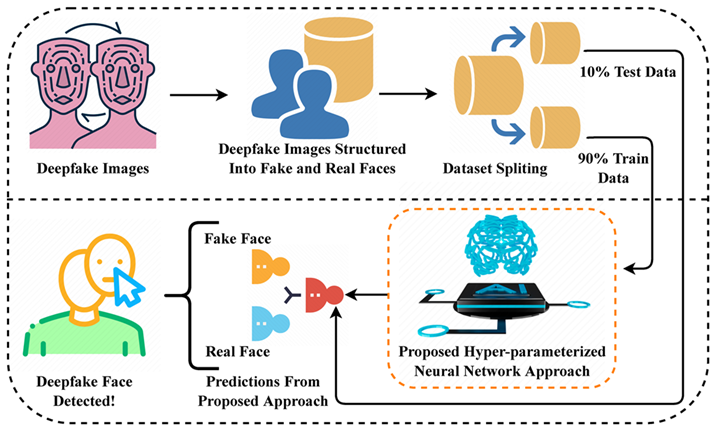

Detecting synthetic media is a constant cat-and-mouse game. As generative models improve, the artifacts that once gave them away (like unnatural blinking or blurry edges) are disappearing. Current detection methods fall into several categories:

- Behavioral Analysis: Looking for subtle, non-human cues like inconsistent head movements, odd facial tics, or unnatural emotional expressions.

- Digital Forensics: Analyzing the digital fingerprint of a file, such as inconsistencies in compression, metadata, or pixel noise patterns that differ from those produced by real camera sensors.

- AI-Powered Detectors: Training machine learning models to recognize the patterns and artifacts left by specific generative models. However, these detectors must be constantly retrained to keep up with new generation techniques.
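
As a rough illustration of the digital forensics category, the sketch below walks the header segments of a JPEG file and reports whether an Exif metadata block is present. The function names (`list_jpeg_segments`, `has_exif`, `make_segment`) and the hand-built test bytes are assumptions made for this example, and the parser is deliberately simplified (it ignores fill bytes and standalone markers that a production parser would handle).

```python
import struct

# Names for a few well-known JPEG marker codes; anything else is shown in hex.
MARKER_NAMES = {0xE0: "APP0", 0xE1: "APP1", 0xDB: "DQT", 0xC0: "SOF0",
                0xC4: "DHT", 0xDA: "SOS", 0xFE: "COM"}


def list_jpeg_segments(data: bytes) -> list[tuple[str, bytes]]:
    """Return (marker_name, payload) pairs for the header segments of a JPEG."""
    if data[:2] != b"\xff\xd8":
        raise ValueError("not a JPEG: missing SOI marker")
    segments = []
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            raise ValueError(f"expected marker at offset {pos}")
        code = data[pos + 1]
        # The 2-byte big-endian length counts itself but not the marker bytes.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        payload = data[pos + 4:pos + 2 + length]
        segments.append((MARKER_NAMES.get(code, f"0x{code:02X}"), payload))
        if code == 0xDA:  # start of scan: compressed image data follows
            break
        pos += 2 + length
    return segments


def has_exif(data: bytes) -> bool:
    """True if any APP1 segment carries the standard Exif identifier."""
    return any(name == "APP1" and payload.startswith(b"Exif\x00\x00")
               for name, payload in list_jpeg_segments(data))


def make_segment(code: int, payload: bytes) -> bytes:
    """Build one JPEG segment (used here only to create demo input)."""
    return bytes([0xFF, code]) + struct.pack(">H", len(payload) + 2) + payload


# Hand-built header bytes for demonstration; these are not decodable images.
with_exif = (b"\xff\xd8" + make_segment(0xE1, b"Exif\x00\x00demo")
             + make_segment(0xDB, b"\x00" * 4))
without_exif = (b"\xff\xd8" + make_segment(0xE0, b"JFIF\x00")
                + make_segment(0xDB, b"\x00" * 4))

print(has_exif(with_exif), has_exif(without_exif))
```

A missing Exif block is only a weak signal: many platforms strip metadata on upload, and metadata can be forged. That is one reason cheap checks like this are usually combined with trained AI detectors.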

Here’s a conceptual Python-like example of how such a detector might work:

    # A simplified, conceptual example of using an AI detection model.
    # `some_detection_library` and `video_utils` are placeholder names, not real packages.
    from some_detection_library import DetectionModel
    import video_utils

    # 1. Load a pre-trained model that recognizes generation artifacts
    detector = DetectionModel(model_path="synthetic_media_detector_v4.pth")


    def check_video_authenticity(video_path: str) -> dict:
        """Analyzes a video and returns a verdict along with the model's score."""
        # 2. Preprocess the video into a format the model understands
        processed_video = video_utils.load_and_preprocess(video_path)

        # 3. Run the detection model to get a prediction
        # Returns a score from 0.0 (likely real) to 1.0 (likely synthetic)
        prediction_score = detector.predict(processed_video)
        is_synthetic = prediction_score > 0.85  # Using a confidence threshold

        return {
            "file": video_path,
            "is_likely_synthetic": is_synthetic,
            "confidence_score": f"{prediction_score:.2%}",
        }


    # Example usage:
    result = check_video_authenticity("suspicious_clip.mp4")
    print(result)
    # Example output (the score is illustrative):
    # {'file': 'suspicious_clip.mp4', 'is_likely_synthetic': True, 'confidence_score': '92.17%'}
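
The 0.85 cutoff in the example above is a design choice rather than a fixed rule. The sketch below uses invented scores and labels (not real detector results) to show how moving the threshold trades missed fakes against false alarms. The helper name `rates_at_threshold` is an assumption made for this illustration.

```python
def rates_at_threshold(scores, labels, threshold):
    """Return (true_positive_rate, false_positive_rate) for a score cutoff.

    labels: True means the clip is actually synthetic.
    A clip is flagged when its score is strictly above the threshold,
    matching the `>` comparison used in the detector example.
    """
    flagged = [s > threshold for s in scores]
    true_pos = sum(f and y for f, y in zip(flagged, labels))
    false_pos = sum(f and not y for f, y in zip(flagged, labels))
    positives = sum(labels)
    negatives = len(labels) - positives
    return true_pos / positives, false_pos / negatives


# Hypothetical detector scores and ground-truth labels, invented for illustration.
scores = [0.97, 0.91, 0.88, 0.62, 0.40, 0.86, 0.30, 0.12, 0.55, 0.05]
labels = [True, True, True, True, True, False, False, False, False, False]

for threshold in (0.50, 0.85, 0.95):
    tpr, fpr = rates_at_threshold(scores, labels, threshold)
    print(f"threshold={threshold:.2f}  caught={tpr:.0%}  false_alarms={fpr:.0%}")
```

With these made-up numbers, raising the threshold from 0.50 to 0.95 cuts false alarms from 40% to 0%, but the share of fakes caught also drops from 80% to 20%. Where to set the cutoff depends on which kind of error is more costly for a given use.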

Beyond Detection: Content Provenance and Authentication

Since detection alone is not foolproof, the industry is shifting towards a proactive approach: content provenance. The goal is to create a verifiable chain of custody for digital media from the moment of its creation.

The C2PA Standard

The Coalition for Content Provenance and Authenticity (C2PA) is a leading initiative in this space. It publishes an open technical standard that lets creators attach cryptographically signed, tamper-evident metadata to their content. This "nutrition label" for media can show:

- Who created the content.

- What tools were used (including AI tools).

- When and where it was created.

- Any edits made since its creation.

This doesn't prevent deepfakes, but it gives consumers a reliable way to check the origin and edit history of content that adheres to the standard. Tools like Adobe's Content Credentials are already implementing C2PA.
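
To make the idea of tamper-evident metadata concrete, here is a minimal sketch that binds a set of claims to the exact bytes of a file. It is not the C2PA manifest format and does not use any C2PA library: the real standard relies on certificate-backed digital signatures, whereas this example swaps in an HMAC with a shared demo key so it can run on the Python standard library alone. All names (`make_manifest`, `verify_manifest`, `SIGNING_KEY`) and the claim fields are assumptions made for illustration.

```python
import hashlib
import hmac
import json

# Assumption: a shared demo secret. Real provenance systems use
# certificate-backed public-key signatures, not a shared key.
SIGNING_KEY = b"demo-key-not-for-production"


def _sign(content_hash: str, claims: dict) -> str:
    """HMAC over the content hash plus the claims, serialized deterministically."""
    message = json.dumps({"content_sha256": content_hash, "claims": claims},
                         sort_keys=True).encode("utf-8")
    return hmac.new(SIGNING_KEY, message, hashlib.sha256).hexdigest()


def make_manifest(content: bytes, claims: dict) -> dict:
    """Bind claims (creator, tool, edits) to the exact bytes of the content."""
    content_hash = hashlib.sha256(content).hexdigest()
    return {"content_sha256": content_hash, "claims": claims,
            "signature": _sign(content_hash, claims)}


def verify_manifest(content: bytes, manifest: dict) -> bool:
    """True only if neither the content nor the claims changed after signing."""
    content_hash = hashlib.sha256(content).hexdigest()
    if content_hash != manifest["content_sha256"]:
        return False  # the media bytes were altered
    expected = _sign(content_hash, manifest["claims"])
    return hmac.compare_digest(expected, manifest["signature"])


original = b"raw image bytes would go here"
manifest = make_manifest(original, {"creator": "Example Studio",
                                    "tool": "hypothetical-editor 1.0",
                                    "ai_generated": False})

print(verify_manifest(original, manifest))                # untouched content
print(verify_manifest(original + b"tampered", manifest))  # edited content
forged = {**manifest, "claims": {**manifest["claims"], "creator": "Someone Else"}}
print(verify_manifest(original, forged))                  # edited claims
```

Only the first check passes. Changing either the media bytes or the claims invalidates the manifest, which is the property that makes provenance data useful to a viewer.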

Evolving Regulations and Policy

Governments and platforms are slowly responding to the threat of malicious synthetic media. The regulatory landscape includes:

- Labeling Mandates: Requiring clear labels on AI-generated content, especially in political advertising. The EU AI Act includes such transparency provisions.

- Platform Policies: Social media companies are implementing policies to remove harmful deepfakes, though enforcement remains a challenge.

- Criminalization: Laws are being passed to criminalize the creation and distribution of non-consensual synthetic pornography and deepfakes used for fraud or election interference.

Best Practices for a Responsible Ecosystem

A truly effective strategy requires shared responsibility:

- For Creators: Use watermarking and adopt C2PA standards to certify authentic work. Clearly label any synthetic media you produce.

- For Platforms: Invest heavily in detection tools, enforce clear labeling policies, and rapidly down-rank or remove harmful, unverified content.

- For Consumers: Cultivate a healthy skepticism. Before sharing shocking content, look for provenance information, check multiple sources, and use reverse image search tools.
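
For platforms, these practices could be combined into a simple triage rule. The sketch below is one hypothetical way to do it, not a description of any real platform's policy: the `MediaItem` fields, the action names, and the 0.85 threshold are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class MediaItem:
    detector_score: float       # 0.0 (likely real) to 1.0 (likely synthetic)
    has_valid_provenance: bool  # provenance data present and verified
    labeled_synthetic: bool     # uploader disclosed AI generation
    reported_harmful: bool      # flagged by users or reviewers


def triage(item: MediaItem, threshold: float = 0.85) -> str:
    """Return a hypothetical moderation action for one item.

    Only the synthetic-media path is modeled; other harmful content
    would be handled by separate policies.
    """
    flagged = item.detector_score > threshold
    if not (flagged or item.labeled_synthetic):
        return "allow"
    if item.reported_harmful:
        return "remove_and_review"
    if item.labeled_synthetic:
        return "allow_with_label"
    if item.has_valid_provenance:
        return "human_review"  # detector and provenance signals conflict
    return "downrank_and_label"


examples = {
    "disclosed AI artwork": MediaItem(0.95, False, True, False),
    "reported face swap": MediaItem(0.93, False, False, True),
    "unlabeled, unverified clip": MediaItem(0.90, False, False, False),
    "camera photo with credentials": MediaItem(0.10, True, False, False),
}
for name, item in examples.items():
    print(f"{name}: {triage(item)}")
```

The ordering of the checks is the design choice here: reports of harm take priority over disclosure, and conflicting signals are routed to a person rather than resolved automatically.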

Conclusion

Synthetic media is here to stay. Banning the technology is neither feasible nor desirable, given its creative benefits. The path forward lies in building a robust ecosystem of trust. By combining advanced detection, verifiable content provenance, clear regulations, and widespread media literacy, we can mitigate the risks of deepfakes while harnessing the positive potential of synthetic media.

"Trust in the digital age is not about eliminating synthetic media, but about building robust systems for verifying authenticity." - Ashish Gore

If you’re interested in exploring how to integrate media auditing tools or develop policies for your organization, feel free to reach out through my contact information.