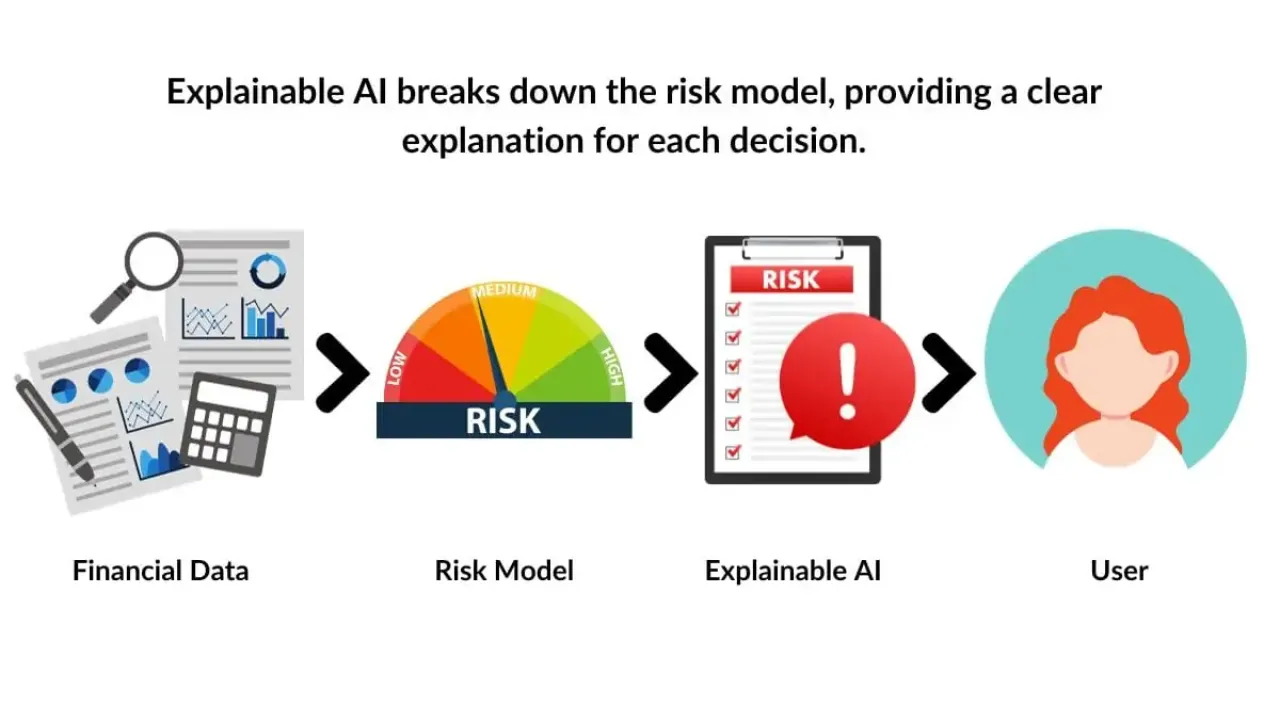

Financial services rely heavily on machine learning for decisions like credit scoring, fraud detection, and risk assessment. These decisions carry regulatory and ethical implications. This makes explainability essential. In this post, I will cover why explainability matters in finance, practical techniques such as SHAP and LIME, fairness tools, and how to integrate explainability into production systems.

Why explainability matters in finance

Financial institutions are accountable for every decision that affects customers. Regulators require transparency in automated systems. Customers demand fair and understandable outcomes. Internal stakeholders need to trust the models before adoption. Without explainability, even accurate models may not be usable.

- Regulatory compliance. Regulations and guidance such as GDPR, RBI guidelines, and the EU AI Act place transparency and explanation obligations on automated decision-making.

- Fairness and trust. Customers should not feel discriminated against by black-box models.

- Operational debugging. Explanations help data scientists detect bias and data drift.

Techniques for explainability

Different methods are used depending on whether you need global model-level insights or local explanations for a single prediction.

1. Global interpretability

- Feature importance. Methods such as permutation importance or Gini importance show which features drive predictions overall.

- Partial dependence plots (PDP). Show how the average predicted risk changes as one feature varies, averaging over the observed values of the other features.

- Global SHAP values. Aggregated SHAP values help quantify feature impact across the dataset.
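To make the idea behind permutation importance concrete, here is a minimal from-scratch sketch in plain Python. The toy model, the feature names `income` and `age`, and the synthetic data are all assumptions for illustration; in practice you would more likely use a library implementation on your real model.

```python
import random


def accuracy(model, rows, labels):
    """Fraction of rows where the model's prediction matches the label."""
    correct = sum(model(row) == label for row, label in zip(rows, labels))
    return correct / len(rows)


def permutation_importance(model, rows, labels, n_repeats=10, seed=0):
    """Mean drop in accuracy when each feature column is shuffled."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(len(rows[0])):
        drops = []
        for _ in range(n_repeats):
            # Shuffle only column j, breaking its link to the labels.
            column = [row[j] for row in rows]
            rng.shuffle(column)
            shuffled = [row[:j] + [v] + row[j + 1:] for row, v in zip(rows, column)]
            drops.append(baseline - accuracy(model, shuffled, labels))
        importances.append(sum(drops) / n_repeats)
    return importances


# Hypothetical toy model: approve (1) when income is at least 50; age is ignored.
def toy_model(row):
    income, age = row
    return 1 if income >= 50 else 0


data_rng = random.Random(42)
rows = [[data_rng.uniform(0, 100), data_rng.uniform(18, 70)] for _ in range(200)]
labels = [toy_model(row) for row in rows]

importances = permutation_importance(toy_model, rows, labels)
print(dict(zip(["income", "age"], importances)))
```

Because the toy model never looks at `age`, shuffling that column cannot change any prediction, so its importance is zero, while shuffling `income` causes a clear drop in accuracy.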

2. Local interpretability

- LIME (Local Interpretable Model-agnostic Explanations). Approximates the model locally with a simpler interpretable model.

- SHAP (SHapley Additive exPlanations). Uses concepts from cooperative game theory to fairly attribute contributions of features to a prediction.

- Counterfactual explanations. Show what minimal changes in input would flip the prediction (e.g., income increased by ₹10,000 might change loan approval).
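A counterfactual search can be sketched in a few lines. The scoring rule below, the field names `income` and `past_defaults`, and the ₹10,000 step size are hypothetical stand-ins for a trained model; dedicated counterfactual libraries search over many features at once, whereas this sketch varies income only.

```python
def approve(applicant):
    """Hypothetical scoring rule standing in for a trained model."""
    score = applicant["income"] / 1_000 - 50 * applicant["past_defaults"]
    return score >= 500


def income_counterfactual(applicant, step=10_000, max_steps=100):
    """Smallest income increase (in multiples of `step`) that flips a rejection."""
    if approve(applicant):
        return 0  # already approved, nothing to change
    for k in range(1, max_steps + 1):
        candidate = {**applicant, "income": applicant["income"] + k * step}
        if approve(candidate):
            return k * step
    return None  # no counterfactual found within the search range


applicant = {"income": 480_000, "past_defaults": 0}
increase = income_counterfactual(applicant)
print(f"Approval would flip with an income increase of ₹{increase:,}")
```

For this toy rule the search returns 20,000, which can be phrased to the applicant as "an income of ₹500,000 would have led to approval".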

Hands-on examples

Using SHAP for credit scoring

    import shap
    import xgboost as xgb

    # Train model (load_credit_data is a placeholder for your own data loader)
    X, y = load_credit_data()
    model = xgb.XGBClassifier().fit(X, y)

    # Create SHAP explainer
    explainer = shap.TreeExplainer(model)
    shap_values = explainer(X)

    # Plot summary
    shap.summary_plot(shap_values, X)

This gives a global view of feature influence. For an individual applicant, you can visualize which factors increased or decreased approval probability.
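To see what a per-applicant attribution means, it can help to compute exact Shapley values by brute force on a tiny model. This is an illustrative sketch, not how `TreeExplainer` works internally: it enumerates every feature subset (exponential cost), and it assumes "absent" features are replaced by a single baseline value, whereas SHAP typically averages over a background dataset. The toy scoring function and its three features are made up.

```python
from itertools import combinations
from math import factorial


def shapley_values(predict, instance, baseline):
    """Exact Shapley values; features outside a coalition take the baseline value."""
    n = len(instance)

    def value(coalition):
        x = [instance[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(x)

    phi = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            # Shapley weight for coalitions of this size that exclude feature i.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                coalition = set(subset)
                total += weight * (value(coalition | {i}) - value(coalition))
        phi.append(total)
    return phi


# Hypothetical score with an interaction between the first two features.
def toy_score(x):
    income, history, debt = x
    return 2 * income + 3 * history - 4 * debt + income * history


instance = [3, 2, 1]
baseline = [0, 0, 0]
phi = shapley_values(toy_score, instance, baseline)
print(dict(zip(["income", "history", "debt"], phi)))

# Additivity: contributions sum to the gap between this prediction and the baseline.
print(sum(phi), toy_score(instance) - toy_score(baseline))
```

The interaction term is split evenly between `income` and `history`, and the contributions add up to the difference between the applicant's score and the baseline score. That additivity is what makes per-applicant SHAP plots readable as "what pushed the score up or down".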

Using LIME for transaction fraud

    from lime.lime_tabular import LimeTabularExplainer

    # Assumes X_train and X_test are pandas DataFrames and model is a fitted classifier
    explainer = LimeTabularExplainer(
        training_data=X_train.values,
        feature_names=X_train.columns.tolist(),
        class_names=['Legit', 'Fraud'],
        mode='classification'
    )

    # Explain one transaction
    exp = explainer.explain_instance(X_test.iloc[0].values, model.predict_proba)
    exp.show_in_notebook()

LIME provides a simple explanation showing which feature values pushed the fraud probability up or down for that specific case.

Fairness and bias tools

In finance, fairness is as critical as accuracy. Disparate impact must be monitored to ensure certain groups are not unfairly penalized.

- Fairlearn. An open-source toolkit from Microsoft for bias mitigation and fairness assessment.

- AIF360. IBM’s toolkit for measuring bias and implementing fairness-enhancing interventions.

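For example, Fairlearn can report the demographic parity difference directly:

    from fairlearn.metrics import demographic_parity_difference

    # Example fairness metric
    # Assumes y_true and y_pred are label arrays and X has a 'gender' column
    dp_diff = demographic_parity_difference(y_true, y_pred, sensitive_features=X['gender'])
    print("Demographic parity difference:", dp_diff)

This helps quantify fairness. The metric is the gap between the highest and lowest rates of positive predictions across groups, so values closer to 0 indicate the model treats groups more equally on this criterion.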
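To show what the demographic parity difference measures, here is a small hand-rolled version in plain Python. It is a sketch for intuition rather than a replacement for Fairlearn; the group labels and predictions are made-up data.

```python
from collections import defaultdict


def selection_rates(y_pred, groups):
    """Share of positive (1) predictions within each group."""
    positives = defaultdict(int)
    counts = defaultdict(int)
    for pred, group in zip(y_pred, groups):
        positives[group] += pred
        counts[group] += 1
    return {group: positives[group] / counts[group] for group in counts}


def demographic_parity_gap(y_pred, groups):
    """Difference between the highest and lowest group selection rates."""
    rates = selection_rates(y_pred, groups)
    return max(rates.values()) - min(rates.values())


# Made-up predictions: group A is approved 3 times out of 4, group B once out of 4.
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

print(selection_rates(y_pred, groups))
print(demographic_parity_gap(y_pred, groups))
```

Note that this quantity depends only on the predictions and the group membership, not on the true labels, which is why it is usually tracked alongside error-rate-based fairness metrics rather than on its own.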

Integrating XAI into production

Explainability should not be an afterthought. Build it into your ML lifecycle.

1. Model governance dashboards

Create dashboards that show feature importance trends, SHAP summaries, and fairness metrics. Share them with compliance teams.

2. Human-in-the-loop systems

Provide explanations alongside model outputs so loan officers or risk managers can override decisions when appropriate.
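One way to surface explanations to a reviewer is to turn per-feature contributions (for example, SHAP values for one applicant) into short plain-language reasons. The sketch below assumes negative contributions lower the approval score; the feature names, values, and wording are hypothetical.

```python
def reason_codes(contributions, descriptions, top_k=2):
    """Plain-language reasons for the features that lowered the score the most."""
    negative = [(name, value) for name, value in contributions.items() if value < 0]
    negative.sort(key=lambda item: item[1])  # most negative contribution first
    return [descriptions.get(name, name) for name, _ in negative[:top_k]]


# Hypothetical per-feature contributions for one applicant.
contributions = {
    "income": 0.12,
    "debt_ratio": -0.30,
    "credit_history_months": -0.05,
    "recent_inquiries": -0.18,
}

# Hypothetical mapping from feature names to reviewer-friendly wording.
descriptions = {
    "debt_ratio": "Debt is high relative to income",
    "credit_history_months": "Credit history is short",
    "recent_inquiries": "Several recent credit inquiries",
}

for reason in reason_codes(contributions, descriptions):
    print("-", reason)
```

Showing the top reasons next to the model output gives a loan officer something specific to verify or challenge before overriding a decision.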

3. Continuous monitoring

Monitor drift and fairness over time. Automated alerts can trigger retraining or bias audits.
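As one possible drift check, the Population Stability Index (PSI) compares the binned distribution of a feature or score at training time with what is seen in production. The sketch below is a minimal plain-Python version; the bin edges, the synthetic samples, and the 0.25 alert threshold (a commonly quoted rule of thumb, not a standard) are assumptions you would tune for your own data.

```python
import math
from bisect import bisect_right


def bin_shares(values, edges, eps=1e-6):
    """Share of values per bin, floored at eps so the logarithm stays defined."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        # Clamp so values at or beyond the outer edges land in the end bins.
        index = min(max(bisect_right(edges, v) - 1, 0), len(counts) - 1)
        counts[index] += 1
    return [max(c / len(values), eps) for c in counts]


def psi(expected, actual, edges):
    """Population Stability Index between a reference and a live sample."""
    e = bin_shares(expected, edges)
    a = bin_shares(actual, edges)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
training_scores = [i / 100 for i in range(100)]    # spread evenly over [0, 1)
live_scores = [0.5 + i / 200 for i in range(100)]  # shifted into [0.5, 1)

drift = psi(training_scores, live_scores, edges)
ALERT_THRESHOLD = 0.25  # assumed rule-of-thumb cut-off
if drift > ALERT_THRESHOLD:
    print(f"PSI={drift:.2f}: distribution shift detected, trigger a review")
```

The same pattern can wrap a fairness metric: recompute it on each new batch of decisions and raise an alert when it crosses an agreed threshold.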

Best practices

- Balance interpretability with performance. Start with simpler models when possible.

- Document model assumptions, data sources, and limitations.

- Provide explanations in user-friendly terms, not just technical metrics.

- Use multiple methods (e.g., SHAP + counterfactuals) for a more complete picture.

Conclusion

Explainable AI is no longer optional in financial services. It is a regulatory requirement, a business enabler, and a trust builder. Tools like SHAP and LIME make explanations accessible, while fairness toolkits help measure and reduce unequal treatment. The key is to embed these techniques into the ML lifecycle, from design to deployment and monitoring. By doing so, we can build AI systems that are not just accurate, but also transparent and fair.

"In finance, a model is only as good as the trust it earns. Explainability is how we build that trust." – Ashish Gore

If you want guidance on implementing XAI for your financial ML systems, feel free to connect with me via my contact information.