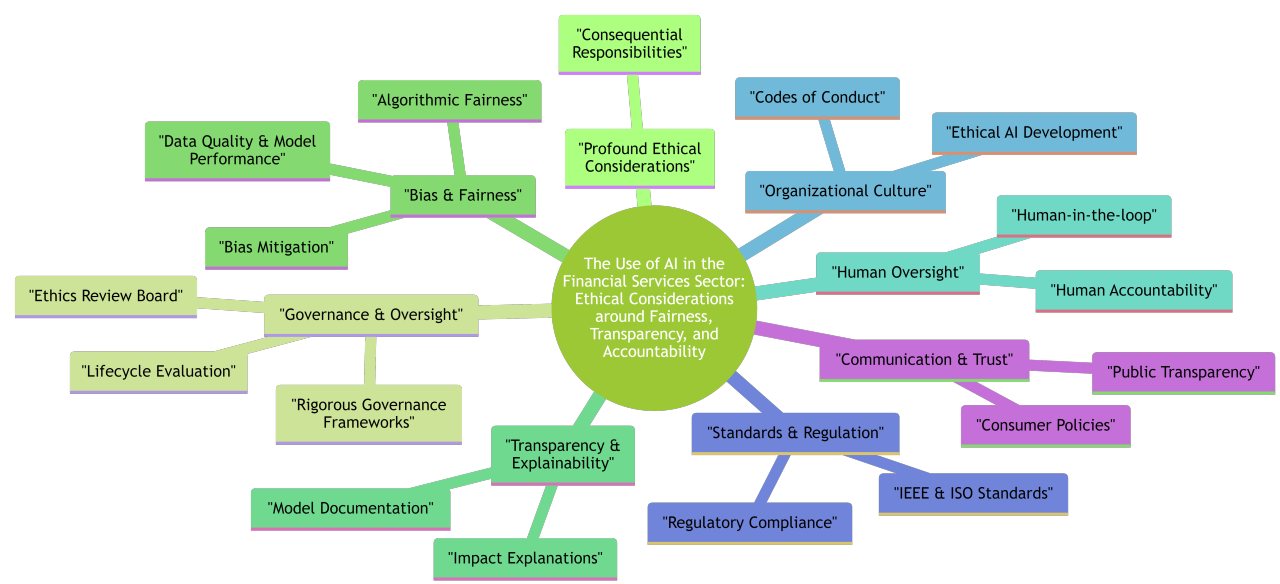

Artificial intelligence is transforming financial services, from credit scoring and fraud detection to personalized investment advice. That power carries responsibility: AI decisions in finance can have profound effects on individuals and society, so ensuring fairness, transparency, and accountability is critical. In this post, I’ll explore what ethical AI means in finance, the risks of neglecting it, practical frameworks for responsible adoption, and the tools available to practitioners.

Why ethics matter in financial AI

Unlike many industries, finance touches the core of people’s lives — access to credit, financial security, and trust in institutions. Biased or opaque algorithms can lead to exclusion, discrimination, or reputational harm.

- Trust and reputation. Ethical lapses damage customer trust and brand reputation.

- Regulatory risk. Regulations around the world (e.g., the EU’s GDPR and AI Act, and RBI guidelines in India) are tightening requirements for explainability and fairness.

- Market advantage. Institutions that adopt ethical AI proactively differentiate themselves as customer-centric and trustworthy.

Core principles of ethical AI

- Fairness. Ensure models do not systematically disadvantage groups based on gender, ethnicity, geography, or socio-economic status.

- Transparency. Provide understandable explanations for AI-driven decisions.

- Accountability. Define clear human ownership for AI outcomes.

- Privacy. Respect data rights and minimize unnecessary data collection.

- Robustness. Build models resilient to manipulation, adversarial attacks, and drift.

Risks of ignoring ethics

History has shown that financial systems can amplify inequality if left unchecked. Automated systems may inadvertently worsen this:

- Rejecting creditworthy individuals due to biased training data.

- Targeting vulnerable customers with predatory loan offers.

- Relying on opaque risk-scoring models that lead to regulatory investigations.

Practical frameworks for responsible AI

Several frameworks can guide implementation of ethical AI in finance:

- NIST AI Risk Management Framework. Provides guidance on identifying, assessing, and mitigating AI risks.

- OECD AI Principles. Global consensus on values such as fairness and accountability.

- Internal governance charters. Many banks create AI ethics boards and review committees for oversight.

Techniques for fairness and explainability

Bias detection

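```python
from fairlearn.metrics import demographic_parity_difference

# y_true, y_pred, and X are assumed to exist already: true labels, model
# predictions, and a feature DataFrame with a 'gender' column.
dp_diff = demographic_parity_difference(y_true, y_pred, sensitive_features=X['gender'])
print("Demographic parity difference:", dp_diff)
```

This metric is the gap between the highest and lowest selection rates (the share of positive predictions) across groups. A value of 0 means every group receives positive outcomes at the same rate.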
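To make the metric less of a black box, here is a minimal sketch that computes the same kind of gap by hand, using only the standard library. The helper names `selection_rates` and `parity_difference` and the toy loan decisions are hypothetical, chosen purely for illustration; this is not fairlearn's implementation.

```python
from collections import defaultdict

def selection_rates(y_pred, groups):
    """Return the share of positive predictions (1s) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(y_pred, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}

def parity_difference(y_pred, groups):
    """Largest minus smallest group selection rate; 0.0 means parity."""
    rates = selection_rates(y_pred, groups)
    return max(rates.values()) - min(rates.values())

# Hypothetical loan decisions: 1 = approved, 0 = declined.
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
gender = ["F", "F", "F", "F", "M", "M", "M", "M"]

rates = selection_rates(y_pred, gender)
gap = parity_difference(y_pred, gender)
print(rates)  # {'F': 0.75, 'M': 0.25}
print(gap)    # 0.5
```

In this toy data, three of four applicants in one group are approved against one of four in the other, so the gap is 0.5. What counts as an acceptable gap is a policy decision, not something the metric decides.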

Explainability

Use SHAP and LIME to explain model predictions to stakeholders:

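```python
import shap

# model is assumed to be a fitted tree-based model and X_test a feature DataFrame.
explainer = shap.TreeExplainer(model)
shap_values = explainer(X_test)

# Summarize how strongly each feature pushes predictions up or down.
shap.summary_plot(shap_values, X_test)
```

Privacy protection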

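Differential privacy adds calibrated noise to query results or training updates so that no single customer’s record can be inferred, while federated learning trains on data that stays at its source and shares only model updates. Both reduce the exposure of sensitive data during model training.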
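As a rough illustration of the differential privacy idea, the sketch below releases a noisy count using the Laplace mechanism. The function names, the toy balances, and the choice of `epsilon=0.5` are all assumptions made for this example. A production system should use a vetted differential privacy library rather than hand-rolled noise.

```python
import random

def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)

def private_count(values, predicate, epsilon, rng):
    """Release a count with epsilon-differential privacy.

    A count changes by at most 1 when one record is added or removed,
    so its sensitivity is 1 and the Laplace noise scale is 1 / epsilon.
    """
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)

# Hypothetical account balances; the query counts balances below 1000.
balances = [250, 4800, 920, 15000, 610, 3300, 75, 2100]
rng = random.Random(0)  # seeded only to make the sketch repeatable

noisy = private_count(balances, lambda b: b < 1000, epsilon=0.5, rng=rng)
print(round(noisy, 2))  # the true count is 4; the released value is 4 plus noise
```

A smaller `epsilon` means more noise and stronger privacy. A larger one gives more accurate answers with weaker guarantees.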

Embedding ethics into the ML lifecycle

- Design. Define fairness objectives and constraints upfront.

- Data collection. Audit datasets for representativeness and hidden biases.

- Model development. Use fairness-aware algorithms and interpretability tools.

- Deployment. Provide human-in-the-loop oversight for high-impact decisions.

- Monitoring. Continuously track bias, drift, and customer feedback.
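For the monitoring step, one option is the Population Stability Index (PSI), a drift measure often used in credit risk. It is an assumption here, not something the lifecycle above prescribes. The sketch compares the distribution of model scores at training time with a recent window. The function names, bin edges, and scores are hypothetical.

```python
import math

def bin_shares(sample, edges, floor=1e-6):
    """Share of the sample in each [edges[i], edges[i+1]) bin, floored to avoid log(0)."""
    counts = [0] * (len(edges) - 1)
    for x in sample:
        for i in range(len(counts)):
            if edges[i] <= x < edges[i + 1]:
                counts[i] += 1
                break
    return [max(c / len(sample), floor) for c in counts]

def psi(baseline, current, edges):
    """Population Stability Index: sum of (cur - base) * ln(cur / base) over bins."""
    base = bin_shares(baseline, edges)
    cur = bin_shares(current, edges)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))

# Hypothetical model scores in [0, 1): at training time and in a recent window.
edges = [0.0, 0.25, 0.5, 0.75, 1.0]
training_scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
recent_scores = [0.6, 0.7, 0.8, 0.9, 0.7, 0.8, 0.9, 0.95]

print(psi(training_scores, training_scores, edges))  # identical samples give 0.0
drift = psi(training_scores, recent_scores, edges)
print(round(drift, 2))
```

A commonly cited rule of thumb treats a PSI above roughly 0.25 as a significant shift worth investigating, though the right threshold depends on the portfolio. The same comparison can be run per customer group to watch for bias drift as well as overall drift.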

Case study: Ethical credit scoring

A bank deploying an AI credit scoring model found that approval rates were disproportionately low for younger applicants. Guided by fairness metrics, the team adjusted feature weights and added transparency tools to explain scores. The result was improved fairness without significant accuracy loss, along with stronger regulatory compliance.
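A gap like the one in this case study could be surfaced with a check along the following lines, which buckets applicants into age bands and compares approval rates. This is a sketch under assumed data, not the bank's actual method. The age bands, the toy decisions, and the function name `approval_rate_by_band` are hypothetical.

```python
def approval_rate_by_band(ages, approved, bands):
    """Approval rate per age band; bands maps a label to an inclusive (low, high) range."""
    rates = {}
    for label, (low, high) in bands.items():
        decisions = [a for age, a in zip(ages, approved) if low <= age <= high]
        if decisions:  # skip bands with no applicants
            rates[label] = sum(decisions) / len(decisions)
    return rates

# Hypothetical applicants: age and decision (1 = approved, 0 = declined).
ages = [22, 24, 27, 29, 35, 41, 48, 52, 58, 63]
approved = [0, 0, 1, 0, 1, 1, 1, 0, 1, 1]
bands = {"18-29": (18, 29), "30-49": (30, 49), "50+": (50, 120)}

rates = approval_rate_by_band(ages, approved, bands)
ratio = min(rates.values()) / max(rates.values())
print(rates["18-29"], rates["30-49"])  # 0.25 1.0
print(ratio)                           # 0.25
```

A ratio far below 1 flags a band whose approval rate lags the best-served group. Whether that gap is justified by legitimate risk factors still needs human review.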

Best practices checklist

- Establish an AI ethics board with diverse stakeholders.

- Adopt a clear AI ethics charter aligned with regulations.

- Audit datasets regularly for bias and representativeness.

- Provide clear, plain-language explanations for customers.

- Use technical safeguards like differential privacy where appropriate.

Conclusion

AI in finance can unlock innovation, but ethical guardrails are non-negotiable. By embedding fairness, transparency, and accountability into the ML lifecycle, financial institutions can both comply with regulation and build long-term trust. Ethical AI is not just a compliance exercise — it is a strategic advantage in building customer loyalty and resilient systems.

"Responsible AI is not about slowing innovation; it is about ensuring innovation benefits everyone." – Ashish Gore

If you’d like a practical toolkit of fairness metrics and explainability libraries tailored to financial use cases, feel free to connect with me via my contact information.